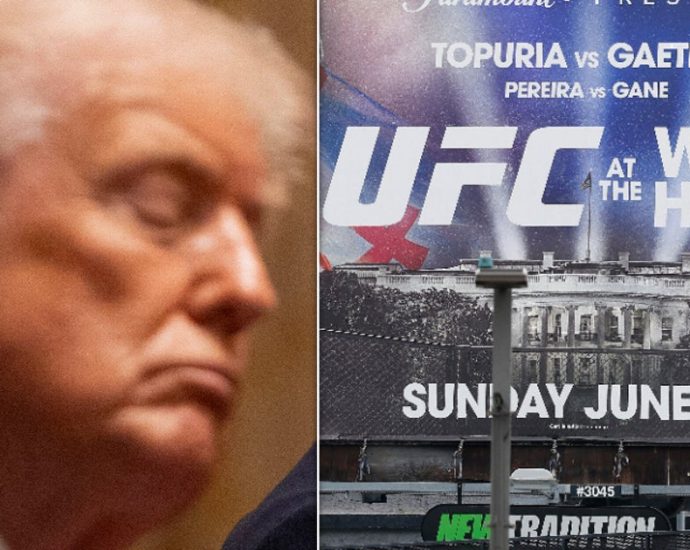

Comedian Tricks MAGA Loyalists Into Revealing Blatant Hypocrisy

June is, of course, Pride Month, and one political satirist is taking pride in how he mocked the hypocrisy of MAGA members about the month intended to celebrate the LGBTQ+ community. Comedian Walter Masterson recently attended a MAGA rally in Florida and immediately started trolling Traitor 47 supporters by griping…